How strict should AI policies be within an organization? For CISOs, this question sits at the intersection of risk management, regulatory expectations, and business enablement. The objective is to establish guardrails that reduce security and compliance risk without creating unnecessary friction for teams that are experimenting with or deploying AI tools. Striking that balance requires clarity about risk tolerance, visibility into actual usage, and a realistic understanding of how employees adopt new technologies.

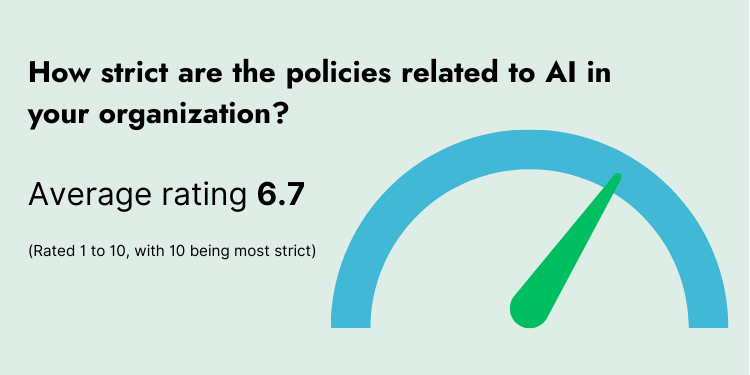

In the 2026 Cyber Security Tribe Annual Report, security leaders were asked: “How strict are the policies related to AI in your organization?” The results show an average AI policy strictness score of 6.7 out of 10, with 54% of respondents rating their policies at 7 or higher. That suggests most organizations are leaning toward meaningful control, but not outright prohibition. You can download the report here: https://www.cybersecuritytribe.com/annual-report

With these benchmarks in mind, the more important discussion is what level of strictness is workable today, and how CISOs can verify that day-to-day behavior aligns with formal policy. It also raises a related governance question: which AI use cases require strict preventive controls, and which can be addressed through monitoring, guidance, and after-the-fact enforcement.

How Strict Should AI Policies Be at Organizations?

With an average AI policy strictness score of 6.7 out of 10, and 54% rating strictness at 7 or higher, we should explore what level of strictness is workable today and how to check whether behavior matches the policy.

In my opinion, a strictness level of 7 should be the minimum to ensure consistent guardrails. However, it's crucial to strike a balance, as a level of 9 might hinder productivity and lead to frustration. My ideal minimum would be 7.5, but an 8.0 might be more suitable for enterprise environments, provided that 7.5 has first proven at least moderately effective.

Red teaming validates the initial policy's effectiveness and establishes an operational mechanism for regularly confirming that the policy still holds, since prompt injection techniques and the organization's security posture are constantly evolving.

How should CISOs decide which AI uses require strict prevention controls versus monitoring and after-the-fact enforcement?

The most effective approach involves three levels of consideration:

1. Risk-based Case Study: Evaluate the sensitivity of the data and determine the risk criticality in relation to business priorities. Assess the potential impact of misuse, which requires a thorough threat modeling analysis. Collaborate with the business to decide if the benefits outweigh the risks.

2. Control-Mode Decisioning (Prevent vs. Monitor): Determine if the consequences would be irreversible or catastrophic if only monitoring were in place. Identify cases that cannot rely solely on monitoring, and consider those that are harmful when acting autonomously (e.g., autonomous agents such as Clawbot). Evaluate any regulatory implications of monitoring alone versus requiring preventative measures. Lastly, assess the business impact if preventative controls were either ineffective or absent, including potential regulatory consequences.

3. Ongoing Oversight & Tuning: Examine the tooling and its capabilities, the expertise of those overseeing the process, and the accountability of the cross-team oversight group that needs to be involved. Involve data governance so that it has a stake in the outcomes, as these considerations extend beyond cybersecurity due to the potential impact on data.
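To make the prevent-versus-monitor decision in level 2 more concrete, it can be sketched as a simple triage function. This is an illustrative sketch only, not a method taken from the report: the `AIUseCase` fields, the 1-to-5 sensitivity scale, and the `sensitivity_threshold` default are all hypothetical and would need to be replaced by an organization's own risk criteria.

```python
from dataclasses import dataclass
from enum import Enum


class ControlMode(Enum):
    PREVENT = "prevent"   # block or gate the use case up front
    MONITOR = "monitor"   # allow, then log, review, and enforce afterwards


@dataclass
class AIUseCase:
    """Hypothetical record of the answers gathered in levels 1 and 2."""
    name: str
    data_sensitivity: int                  # assumed scale: 1 (public) to 5 (highly sensitive)
    irreversible_harm: bool                # would misuse be irreversible or catastrophic?
    autonomously_harmful: bool             # can the tool cause harm acting on its own?
    regulation_requires_prevention: bool   # is monitoring alone insufficient for regulators?


def decide_control_mode(use_case: AIUseCase, sensitivity_threshold: int = 4) -> ControlMode:
    """Return PREVENT if any condition makes monitoring alone inadequate."""
    if use_case.irreversible_harm:
        return ControlMode.PREVENT
    if use_case.autonomously_harmful:
        return ControlMode.PREVENT
    if use_case.regulation_requires_prevention:
        return ControlMode.PREVENT
    # Assumption: highly sensitive data defaults to prevention.
    if use_case.data_sensitivity >= sensitivity_threshold:
        return ControlMode.PREVENT
    return ControlMode.MONITOR


# Example use cases (invented for illustration).
drafting = AIUseCase("marketing copy drafting", 1, False, False, False)
agent = AIUseCase("autonomous agent with production access", 3, True, True, False)

print(drafting.name, "->", decide_control_mode(drafting).value)
print(agent.name, "->", decide_control_mode(agent).value)
```

In practice the inputs come from the threat modeling and business discussions described in level 1, and the thresholds are revisited as part of the ongoing oversight in level 3.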

To explore the subject further, we answer questions such as "What policy areas matter most in real incidents?" and "What AI-related incident scenario do you think is most likely to catch organizations off guard in 2026?" in the Cyber Security Tribe State of the Industry Annual Report 2026, which is available for you to download now.